What is High Availability?

When considering the architecture of business-critical applications, common concerns include the ability to process large volumes of transactions and simultaneous client connections, but decreasing downtime and eliminating single points of failure are just as important. Organizations tend to look towards High Availability (HA) to address these latter concerns. High availability refers to systems or components that remain operational without interruption for long periods of time. In essence, ‘high availability’ is a quality of infrastructure design.

High availability is measured as a percentage, with 100% indicating a service that experiences zero downtime: a system that never fails and never needs to be taken offline for maintenance. For instance, a system that guarantees 99% availability over a period of one year can have up to 3.65 days of downtime (1% of 365 days).
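The arithmetic behind that figure can be sketched in a few lines of Python. The function name and the list of targets are illustrative choices, and the calculation assumes a 365-day year.

```python
def allowed_downtime_hours(availability_percent: float,
                           period_hours: float = 365 * 24) -> float:
    """Return the maximum downtime (in hours) permitted by an availability target."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability must be between 0 and 100")
    return period_hours * (1 - availability_percent / 100)


# Compare a few commonly quoted targets over one year.
for target in (99.0, 99.9, 99.99, 99.999):
    hours = allowed_downtime_hours(target)
    print(f"{target}% -> {hours:.2f} hours/year ({hours / 24:.2f} days)")
```

Each extra "nine" cuts the permitted downtime by a factor of ten, which is why the higher targets become expensive so quickly.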

Eliminate Single Points of Failure

One of the foundations of high availability is eliminating single points of failure. A single point of failure is any component of the system that would cause a service disruption if it were to become unavailable. Therefore, any component that your application needs in order to function, and that has no redundancy, is considered a single point of failure.

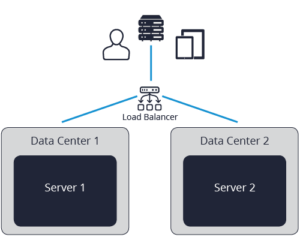

To eliminate single points of failure, each layer of your application stack must be prepared for redundancy. For example, suppose you have a website running on two identical servers that sit behind a load balancer. Users hitting that website can be distributed equally between the servers, and if one of the servers fails, the load balancer can reroute traffic to the remaining server. In this scenario, we’ve eliminated the single point of failure on the server hosting the website.
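The rerouting behaviour described above can be sketched as a toy round-robin balancer. This is a minimal illustration, not how any particular load balancer product is implemented; the class name, method names and server names (`web-1`, `web-2`) are all hypothetical.

```python
class RoundRobinBalancer:
    """Toy load balancer: rotates through servers and skips unhealthy ones."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = set(self.servers)
        self._index = 0

    def mark_down(self, server):
        """Record that a health check failed for this server."""
        self.healthy.discard(server)

    def mark_up(self, server):
        """Record that a known server has recovered."""
        if server in self.servers:
            self.healthy.add(server)

    def route(self):
        """Return the next healthy server, or fail if none remain."""
        for _ in range(len(self.servers)):
            server = self.servers[self._index % len(self.servers)]
            self._index += 1
            if server in self.healthy:
                return server
        raise RuntimeError("no healthy servers available")


lb = RoundRobinBalancer(["web-1", "web-2"])
print([lb.route() for _ in range(4)])  # traffic alternates between servers

lb.mark_down("web-1")                  # simulate a server failure
print([lb.route() for _ in range(4)])  # all traffic goes to web-2
```

Note that the balancer object itself is the only path to the servers in this sketch, so it is still a single point of failure.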

However, what happens if the load balancer goes down? Or the data center that houses the load balancers goes offline due to a network outage? It is possible to work around this by adding another load balancer; however, this introduces other issues, such as the need for a Domain Name System (DNS) change should the new load balancer also fail.

High availability brings with it an increase in complexity. Building for high availability comes down to a series of trade-offs and sacrifices. Firstly, the business needs to understand and calculate the cost of the systems in question being unavailable (either for maintenance or for unexpected issues). This will provide a measured approach as to the complexity of the high availability solution needed. More on this later.

Itential and High Availability

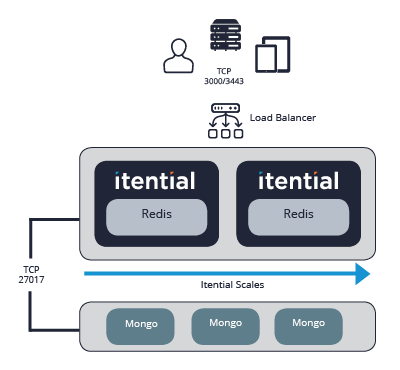

At its core, Itential consists of a stateless application server, a Redis in-memory key-value database that stores login tokens, and a MongoDB database that persists data. In this simple form, each of these components acts as a single point of failure. For example, if the server hosting MongoDB goes down, then the Itential application will become unavailable.
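To see why a chain of unreplicated components is fragile, consider a rough calculation: if every component must be up for the service to work, and failures are assumed to be independent, the overall availability is the product of the individual availabilities. The 99.9% figures below are hypothetical and are not measurements of any Itential, Redis or MongoDB deployment.

```python
from math import prod


def serial_availability(component_availabilities):
    """Availability of a chain in which every component must be up.

    Assumes component failures are independent of one another.
    """
    return prod(component_availabilities)


# Hypothetical figures: application server, Redis and MongoDB at 99.9% each.
stack = {"app server": 0.999, "redis": 0.999, "mongodb": 0.999}
overall = serial_availability(stack.values())
print(f"overall availability: {overall:.4%}")
```

Under these assumptions the stack as a whole is less available than any single component, because each additional dependency adds another way to fail.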

So, applying the concepts discussed above, we can eliminate a single point of failure by mirroring the application server, sticking a load balancer on top and adding some data replication to MongoDB. This is illustrated by the diagram below. In this example we’ve reduced the number of servers required by installing Redis on the same machine as the Itential application server. This works because we can configure the load balancer to use sticky sessions, meaning that once a user has established a login, the load balancer will send them to the same server.

Now, let’s assume an application server goes offline. This is OK because the load balancer will direct the user to the remaining server. However, as the original server is no longer available, the session token used by the client session (stored in the Redis database on that server) is no longer valid, meaning the user is prompted to log in again before they can continue with whatever they were doing.
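The failure mode just described can be reproduced with a small simulation. It is a toy model only: the `AppServer` class, the `route` function and the server names are hypothetical, and a plain dictionary stands in for the Redis instance installed alongside each application server.

```python
import uuid


class AppServer:
    """Toy application server with its own local session store."""

    def __init__(self, name):
        self.name = name
        self.online = True
        self.sessions = {}  # stands in for the Redis instance on this machine

    def login(self, user):
        """Create a session token and keep it in the local store."""
        token = uuid.uuid4().hex
        self.sessions[token] = user
        return token

    def has_session(self, token):
        return token in self.sessions


def route(servers, sticky_server):
    """Return the sticky server if it is online, otherwise any online server."""
    if sticky_server.online:
        return sticky_server
    for server in servers:
        if server.online:
            return server
    raise RuntimeError("no application servers online")


servers = [AppServer("app-1"), AppServer("app-2")]
token = servers[0].login("alice")        # session is pinned to app-1

servers[0].online = False                # app-1 fails
fallback = route(servers, servers[0])    # load balancer reroutes the user
print(fallback.name, fallback.has_session(token))  # app-2 False
```

The request still reaches a working server, but the token only ever existed on `app-1`, so the user has to authenticate again.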

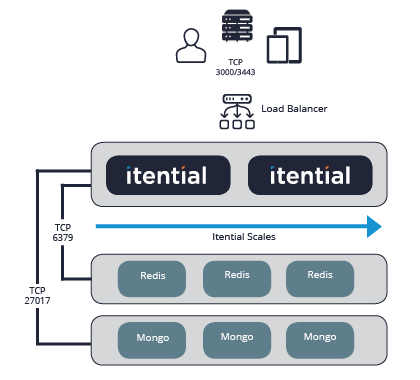

Just as before, we get around this login issue by adding data replication capabilities to Redis, using Redis Sentinel. This requires three additional servers to be added to the solution architecture (as shown in the diagram below).

Now if an application server goes down, the user is unaware as the session tokens remain valid due to them being stored on a separate server. Similarly, if the Redis server goes down, because the data is replicated to secondary Redis databases, the user’s session remains uninterrupted as the Itential application server simply connects to the secondary Redis server.
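As a rough illustration of why replication keeps sessions alive, the sketch below models a primary/replica store in which every write is copied to all nodes. It is deliberately simplified: real Redis replication is asynchronous and Sentinel handles failure detection and promotion, none of which is modelled here. The class and node names are hypothetical.

```python
class ReplicatedSessionStore:
    """Toy primary/replica store; every write is copied to all nodes."""

    def __init__(self, node_names):
        self.nodes = {name: {} for name in node_names}
        self.primary = node_names[0]

    def put(self, token, user):
        """Write the session to the primary and to every replica."""
        for data in self.nodes.values():
            data[token] = user

    def get(self, token):
        """Read the session from whichever node is currently primary."""
        return self.nodes[self.primary].get(token)

    def fail_primary(self):
        """Drop the primary and promote one of the remaining replicas."""
        del self.nodes[self.primary]
        if not self.nodes:
            raise RuntimeError("no replicas left to promote")
        self.primary = next(iter(self.nodes))


store = ReplicatedSessionStore(["redis-1", "redis-2", "redis-3"])
store.put("token-abc", "alice")

store.fail_primary()                          # redis-1 goes down
print(store.primary, store.get("token-abc"))  # redis-2 alice
```

Because the token was copied to the replicas before the failure, the promoted node can still validate it and the session carries on.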

As we can see, we are building up a more fault-tolerant solution, but with that comes increased complexity.
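The benefit of each redundant copy can be estimated with a standard formula: if a component is available with probability a, then n independent copies are all down at once with probability (1 - a)^n. The sketch below applies that formula. The independence assumption is a strong one (shared power, network or data center failures break it), and the 99% figure is hypothetical.

```python
def redundant_availability(single_availability: float, copies: int) -> float:
    """Availability of `copies` redundant components when any one suffices.

    Assumes failures are independent, which shared infrastructure can violate.
    """
    if copies < 1:
        raise ValueError("at least one copy is required")
    return 1 - (1 - single_availability) ** copies


# Hypothetical component that is 99% available on its own.
for n in (1, 2, 3):
    print(f"{n} copies -> {redundant_availability(0.99, n):.6%}")
```

The gains shrink with every copy added while the number of servers to run keeps growing, which is the complexity trade-off in numerical form.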

Striking the Right Balance

In a perfect world, all complex applications would be available every minute of every day. In reality, however, no solution is perfect. Instead, important decisions are required when it comes to the complexity/availability trade-off. As mentioned earlier, the right HA solution depends on the business needs the systems serve. Whilst it’s easy for businesses to demand 99.999% uptime, without the right due diligence it is just as easy to architect an HA solution that is cost prohibitive.

To help guide these decisions, it is worth considering questions like:

- How will my users react if my application is down for 5 minutes? 10 minutes? 1 hour? 3 hours? 24 hours? Multiple days?

- What would be the business impact in relation to lost revenue?

- What will my users accept as maintenance intervals? Once a month? Once a year?

- Do we have the necessary skilled individuals to deploy and maintain such a solution?

- Will I get sufficient return on the investment of more infrastructure complexity?

- If our systems do go down, do we have a solid incident management process to handle incidents and communicate with users during downtime?

The answers to these questions will vary from company to company, and even department to department. And in almost all cases, compromises will need to be sought.
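The revenue and return-on-investment questions can be turned into a back-of-the-envelope comparison. The sketch below is one possible framing, not a prescribed method: the function names are hypothetical, the monetary figures are invented for illustration, and it ignores harder-to-quantify costs such as reputation and staffing.

```python
HOURS_PER_YEAR = 365 * 24


def annual_downtime_cost(availability_percent: float,
                         revenue_per_hour: float) -> float:
    """Estimated yearly revenue lost at a given availability level."""
    downtime_hours = HOURS_PER_YEAR * (1 - availability_percent / 100)
    return downtime_hours * revenue_per_hour


def upgrade_pays_off(current: float, target: float,
                     revenue_per_hour: float,
                     extra_annual_cost: float) -> bool:
    """True if the avoided downtime cost exceeds the extra infrastructure cost."""
    saved = (annual_downtime_cost(current, revenue_per_hour)
             - annual_downtime_cost(target, revenue_per_hour))
    return saved > extra_annual_cost


# Invented figures: 10,000 lost per hour of downtime, moving from 99% to 99.9%.
print(upgrade_pays_off(99.0, 99.9, 10_000, extra_annual_cost=500_000))
print(upgrade_pays_off(99.0, 99.9, 10_000, extra_annual_cost=900_000))
```

Running the same comparison with a department's own numbers gives a first indication of how much complexity is worth paying for.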

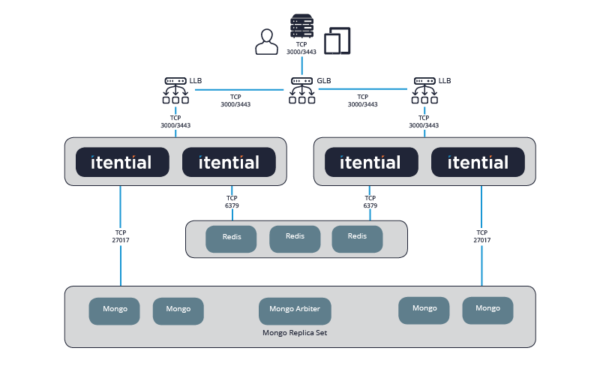

Flexibility to Grow

One of the advantages of Itential’s product architecture is the ability to scale the solution as its use increases within the organization without having to do massive ‘rip and replace’. Rip and replace methods could not only prove costly, but also very disruptive to the teams/services that rely on the solution. As you start to use Itential, its use might be limited to a single team within the organization, but as more and more use cases are added, it will become necessary to support more and more concurrent users and transactions. With its increased usage, the need for reduced downtime becomes a requirement.

The answers you gave to the questions above when you first adopted Itential could be very different two years down the line. For example, at the beginning it might not have been a big issue for users to log in again if a server became unavailable, but that might become unacceptable to a wider user community.

Similarly, when first deployed, a single team could tolerate a few hours of downtime during planned maintenance windows. However, when the platform is used by multiple teams spread across different time zones, not only could the maintenance windows become smaller, but any downtime could severely impact business operations. In that case, a more robust blue/green architecture must be deployed.

The diagram above illustrates how Itential can scale without needing to throw everything out and start again. More application servers can be added to handle increased load. Redis can be migrated from a standalone local database to clusters with data replication. Additional MongoDB servers can be added with minimal disruption.

There’s no debating that eliminating a single point of failure when deploying websites or applications is crucial. However, careful consideration needs to be taken when deciding how far down the availability rabbit hole you’re prepared to go for that all-important uptime. Overengineering a solution, when your organization might not have the required resources to deploy and manage it, could cause more issues than it’s trying to solve.

If you’d like to take a deeper dive into the technologies that provide the foundation for Itential’s Automation Platform High Availability, you can download this technical whitepaper.